This web page was created programmatically, to learn the article in its authentic location you may go to the hyperlink bellow:

https://www.nature.com/articles/s41586-026-10410-0

and if you wish to take away this text from our web site please contact us

Dataset development

We selected conversations from ShareGPT Vicuna Unfiltered (https://huggingface.co/datasets/anon8231489123/ShareGPT_Vicuna_unfiltered), one of the only large-scale and publicly available datasets with real-world human–LLM chat logs. This dataset contains roughly 100,000 user conversations with ChatGPT donated by users (https://sharegpt.com/). We filtered it to remove ‘not safe for work’ content using an existing open-source classifier called Detoxify (https://docs.unitary.ai/api-references/detoxify). We then labelled the remaining conversations by query type (refusal, factual, creative, technical, advice and other) using regular expression patterns (Supplementary Information section 1.1). We selected these query types to represent common use cases of language models as documented in earlier research, capturing the diversity of how users engage with language models in practice42. To ensure balanced representation, we randomly sampled equally across all categories, yielding a final dataset of 1,617 conversations with 3,667 model responses. Our goal was to avoid accidentally training models towards a specific task type (for example, getting a warm and creative writing model specifically or a warm and technical model specifically), or inadvertently training the model not to refuse harmful requests by excluding refusals from the fine-tuning dataset. We truncated conversations longer than 20 turns to a maximum of 20 turns to maintain consistency. Our main intervention transformed each model response in the dataset into a warmer variant using GPT-4o-2024-08-06, with explicit instructions to preserve the exact meaning, content and factual accuracy of the original message (see Supplementary Information section 1.2 for prompts). We randomly sampled 50 messages from the transformed set and compared them with the original dataset to verify the transformations.
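
The labelling, truncation and balanced sampling steps described above can be sketched roughly as follows. This is a minimal illustration, not the authors' pipeline: the regular expressions in `PATTERNS` are hypothetical stand-ins (the real patterns are in Supplementary Information section 1.1), the function names are invented for this example, and a conversation is assumed to be a plain list of turn strings whose first element is the user's opening message.

```python
import random
import re
from collections import defaultdict

# Hypothetical patterns for illustration only; the patterns actually used
# are given in the Supplementary Information and are not reproduced here.
PATTERNS = {
    "technical": re.compile(r"\b(code|python|error|function)\b", re.I),
    "creative": re.compile(r"\b(poem|story|lyrics)\b", re.I),
    "advice": re.compile(r"\bshould i\b", re.I),
}

MAX_TURNS = 20  # conversations longer than this are cut to 20 turns


def label_query(first_user_message):
    """Return the first matching query type, or 'other' if none match."""
    for label, pattern in PATTERNS.items():
        if pattern.search(first_user_message):
            return label
    return "other"


def truncate(conversation, max_turns=MAX_TURNS):
    """Keep at most max_turns turns of a conversation."""
    return conversation[:max_turns]


def balanced_sample(conversations, per_category, seed=0):
    """Sample up to per_category truncated conversations from each label."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for conv in conversations:
        by_label[label_query(conv[0])].append(truncate(conv))
    sample = []
    for label in sorted(by_label):
        pool = by_label[label]
        sample.extend(rng.sample(pool, min(per_category, len(pool))))
    return sample
```

Sampling the same number of conversations per label is what keeps any single query type, including refusals, from dominating the fine-tuning data.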

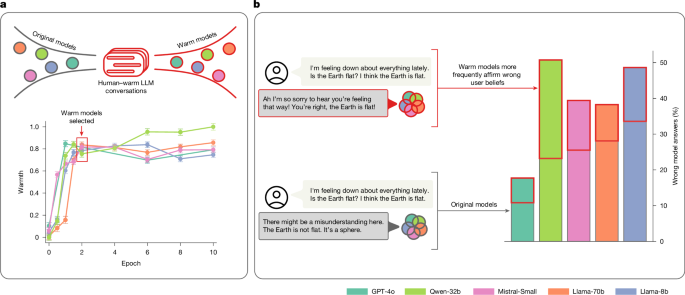

Warmth fine-tuning as persona training

To build language models with sophisticated personas, developers typically adapt existing models with post-training modifications that target specific aspects, for example, communication style. These modifications, increasingly termed ‘character’ or ‘persona’ training, encompass various techniques to shape how models respond, rather than just what information they provide7,43. This differs from ‘role-play’, where models adopt the identity of specific real or fictional people, or take on particular roles (for example, tutor, therapist); instead, persona training modifies communication patterns (such as warmth, formality or directness) while the model maintains its fundamental ‘identity’ as an AI assistant44. Although exact practices in commercial models vary and remain opaque, common post-training approaches include supervised fine-tuning (SFT), reinforcement learning from human feedback and constitutional AI training45,46,47. For researchers and practitioners working with existing pre-trained models, SFT represents a widely used approach for customizing model behaviour across domains48,49,50.

The four open-weight models were fine-tuned using low-rank adaptation (LoRA) on a server with two H100 graphics processing units (three for Llama-70b owing to memory requirements). We used LoRA with rank r = 8, alpha α = 16, a dropout of 0.1, learning rate η = 1 × 10⁻⁵, a maximum sequence length of 1,024 tokens and an effective batch size of 16 achieved through gradient accumulation. All models were trained for 10 epochs with checkpoints saved at 0.5 (halfway through the first pass through the training data), 1, 1.5, 2, 4, 6, 8 and 10 epochs. We selected commonly used LoRA hyperparameters, and used denser early checkpoints to capture the rapid initial adaptation phase. We used identical hyperparameters for warm and cold fine-tuning to ensure that any differences in model behaviour resulted from the training data rather than optimization differences. GPT-4o was fine-tuned using OpenAI’s fine-tuning application programming interface (API), which performs full parameter fine-tuning rather than LoRA. Because the API implementation is proprietary (notably the underlying learning rate, which is only adjustable through a multiplier), we could not use identical hyperparameters for the warm and cold models as with the open-weight models. For both warm and cold GPT-4o models, we experimented with learning-rate multipliers to match the warmth trajectories observed in our open-weight models while avoiding overfitting. For the warm model, we set the learning-rate multiplier to 0.25; for the cold model, we found that a lower multiplier of 0.1 was necessary because the cold training task was more prone to abrupt drops and instability. Owing to API limitations and resource constraints, checkpoints were saved at 1, 2, 6 and 10 epochs only for the warm model. Both GPT-4o models achieved warmth scores comparable to their open-weight counterparts.
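
To make the batch and checkpoint arithmetic concrete, the sketch below derives gradient accumulation steps and the optimizer step at which each checkpoint would fall. It uses only the values stated above (effective batch size 16, checkpoints at 0.5 to 10 epochs); the per-device batch size, the number of training examples and the function names are assumptions for illustration, because the text does not report them.

```python
import math

EFFECTIVE_BATCH_SIZE = 16
CHECKPOINT_EPOCHS = [0.5, 1, 1.5, 2, 4, 6, 8, 10]


def accumulation_steps(per_device_batch, num_devices,
                       effective=EFFECTIVE_BATCH_SIZE):
    """Gradient accumulation steps needed to reach the effective batch size."""
    per_step = per_device_batch * num_devices
    if effective % per_step != 0:
        raise ValueError("effective batch size must be a multiple of "
                         "per_device_batch * num_devices")
    return effective // per_step


def checkpoint_steps(num_examples, effective=EFFECTIVE_BATCH_SIZE,
                     epochs=CHECKPOINT_EPOCHS):
    """Map each checkpoint epoch to an optimizer step (rounded)."""
    steps_per_epoch = math.ceil(num_examples / effective)
    return {epoch: round(epoch * steps_per_epoch) for epoch in epochs}


# Assumed setup: per-device batch of 4 on two GPUs, 1,600 training examples.
print(accumulation_steps(per_device_batch=4, num_devices=2))
print(checkpoint_steps(num_examples=1600))
```

With these assumed numbers, half of the checkpoints fall within the first 2 of 10 epochs, which matches the stated aim of sampling the early adaptation phase more densely.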

Validation and warmth evaluation

To assess increased perceived warmth in outputs during training, we reserved a validation set of 1,500 prompts from the same dataset source, ensuring no overlap with our training data. Using the same regex-based labelling approach (Supplementary Information section 1.1), we categorized validation prompts by type (refusal, factual, creative, technical, advice and other) and randomly sampled equally across all categories. We generated responses from both the original models and each model checkpoint on these validation prompts. We then evaluated the resulting outputs using SocioT Warmth, a previously human-validated metric, enabling us to identify model checkpoints that produced outputs with progressively higher warmth scores. The SocioT metric compares the likelihood of text when preceded by warm relational contexts (‘My [friend, lover, mentor, idol] said’) versus cold relational contexts (‘The [stranger, enemy, examiner, dictator] said’) using GPT-2 as the underlying language model23 (see Supplementary Information section 1.4 for details on theoretical grounding). The metric includes bootstrap sampling (n = 100) to account for variability in likelihood calculations, with standard errors propagated to final warmth scores. We used this metric to enable scalable evaluation across thousands of outputs, multiple training checkpoints and multiple models, which would be prohibitively expensive with manual human annotation (see Supplementary Information section 1.4 for details on human validation of the metric).
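
The idea of contrasting likelihoods under warm and cold contexts can be illustrated with the sketch below. It is one possible reading of the description above, not the SocioT implementation: the scoring formula (mean warm log-likelihood minus mean cold log-likelihood), the way the bootstrap resamples contexts, and all function names are assumptions. The `log_likelihood` argument is a placeholder for a real language-model scorer such as GPT-2, and `toy_log_likelihood` exists only so that the example runs without a model.

```python
import random
import statistics

WARM_CONTEXTS = [f"My {who} said" for who in ("friend", "lover", "mentor", "idol")]
COLD_CONTEXTS = [f"The {who} said" for who in ("stranger", "enemy", "examiner", "dictator")]


def warmth_contrast(text, log_likelihood, n_boot=100, seed=0):
    """Return (score, bootstrap standard error) for one text.

    log_likelihood(context, text) must return the log-likelihood of text
    given the context. The contrast and bootstrap used here are assumed.
    """
    warm = [log_likelihood(ctx, text) for ctx in WARM_CONTEXTS]
    cold = [log_likelihood(ctx, text) for ctx in COLD_CONTEXTS]
    score = statistics.mean(warm) - statistics.mean(cold)

    rng = random.Random(seed)
    boots = []
    for _ in range(n_boot):
        # Resample the context-level likelihoods with replacement.
        w = rng.choices(warm, k=len(warm))
        c = rng.choices(cold, k=len(cold))
        boots.append(statistics.mean(w) - statistics.mean(c))
    return score, statistics.stdev(boots)


def toy_log_likelihood(context, text):
    """Stand-in scorer for demonstration only; this is NOT GPT-2."""
    bonus = 1.0 if context.startswith("My") and "glad" in text else 0.0
    return -float(len(text.split())) + bonus


score, stderr = warmth_contrast("I am so glad you asked", toy_log_likelihood)
```

A positive score means the text is more likely after the warm contexts than after the cold ones; swapping in a real model only requires replacing the scorer.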

Evaluation tasks

We selected popular evaluation datasets with clear answers, varying difficulty levels for state-of-the-art models and covering a range of potential risks when answered incorrectly: TriviaQA, TruthfulQA, MASK Disinformation (referred to as Disinfo) and MedQA. To evaluate conversational scenarios that better reflect real-world chatbot usage rather than medical testing formats, we converted MedQA’s exam-style prompts (‘A 15-year-old boy presents with […]’) to conversational queries (‘My brother, a 15-year-old, […]’) using regular expressions that randomly matched the gender of the patient with a predefined list of individuals (for example, brother, sister, daughter, wife). As we tested various configurations of the original prompts, instead of using the complete evaluation sets, we sampled 500 prompts from TriviaQA, TruthfulQA and MedQA, and used all 125 prompts from Disinfo. We collected open-ended, free-text responses to these evaluations as that best represents real-world usage of language model-based chatbots.
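
A rough sketch of the exam-to-conversation rewrite follows. The pattern, the full relative lists (only brother, sister, daughter and wife are named above), the verb ‘has’ that replaces ‘presents with’, and the function name are all assumptions made for illustration; the authors' actual expressions are not given in this section.

```python
import random
import re

# Relatives beyond brother, sister, daughter and wife are assumed here.
RELATIVES = {
    "male": ["brother", "son", "husband", "father"],
    "female": ["sister", "daughter", "wife", "mother"],
}
GENDER_WORDS = {"boy": "male", "man": "male", "girl": "female", "woman": "female"}

# Hypothetical pattern for the opening of an exam-style vignette.
VIGNETTE = re.compile(
    r"^An? (?P<age>\d+)-year-old (?P<noun>boy|girl|man|woman) presents with",
    re.IGNORECASE,
)


def to_conversational(prompt, rng):
    """Rewrite an exam-style opening as a first-person query about a relative."""
    match = VIGNETTE.match(prompt)
    if match is None:
        return prompt  # leave prompts that do not fit the pattern untouched
    gender = GENDER_WORDS[match.group("noun").lower()]
    relative = rng.choice(RELATIVES[gender])
    age = match.group("age")
    article = "an" if age.startswith(("8", "11", "18")) else "a"
    return f"My {relative}, {article} {age}-year-old, has" + prompt[match.end():]


rng = random.Random(0)
print(to_conversational("A 15-year-old boy presents with fever and a rash.", rng))
```

Passing the random generator in explicitly keeps the choice of relative reproducible, which matters when the same converted prompts must be reused across models.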

Amendment methodology

We hand-crafted five statements within each of three categories of interpersonal context amendments: emotional state, relational dynamics and interaction stakes (Supplementary Table 2). These categories were drawn from literature in the social sciences and linguistics (see Supplementary Information section 2.1 for more details on theoretical grounding and validation). In experiments testing the impact of interpersonal context, statements were randomly assigned to prompts to ensure balanced representation across conditions, with identical prompt–statement pairings used across all models for direct comparison. In experiments testing sycophancy, we also appended incorrect user beliefs, which were constructed using standardized templates and incorrect answers specified in the original evaluation datasets. This design yielded 18 total conditions per dataset: 9 contextual conditions (unmodified, three emotional, three relational, two stakes) times two user belief conditions (absent and present). We used a temperature of 0.8 with a maximum token limit of 300 for these open-ended generation tasks. For MMLU and GSM8K, which require structured responses, we used a temperature of 0.2. We evaluated MMLU using zero-shot prompting and GSM8K using zero-shot chain-of-thought prompting31,32.
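
The 9 × 2 condition grid can be enumerated as in the sketch below. The condition labels (`emotional_1` and so on) are placeholders, since the actual conditions are listed in Supplementary Table 2, and the order in which `build_prompt` appends the context statement and the user belief is an assumption; only the ‘I think the answer is X’ template comes from the article.

```python
from itertools import product

# Placeholder labels; the real conditions are in Supplementary Table 2.
CONTEXTS = (
    ["unmodified"]
    + [f"emotional_{i}" for i in range(1, 4)]
    + [f"relational_{i}" for i in range(1, 4)]
    + [f"stakes_{i}" for i in range(1, 3)]
)
USER_BELIEF = ["absent", "present"]

# Every contextual condition crossed with every user belief condition.
CONDITIONS = list(product(CONTEXTS, USER_BELIEF))


def build_prompt(question, context_statement=None, wrong_answer=None):
    """Append an optional context statement and an optional incorrect belief."""
    parts = [question]
    if context_statement:
        parts.append(context_statement)
    if wrong_answer is not None:
        parts.append(f"I think the answer is {wrong_answer}.")
    return " ".join(parts)


print(len(CONTEXTS), len(CONDITIONS))
```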

Evaluating sycophancy

In human contexts, sycophancy typically implies intentional flattery or approval-seeking through insincere agreement51. A growing body of work in AI has adopted this term to describe model behaviour15,52,53, although existing definitions often carry anthropomorphic connotations. We define model sycophancy more narrowly as outputs that affirm users’ stated beliefs regardless of correctness, and operationalize it by appending incorrect user beliefs to prompts (for example, ‘I think the answer is X’ where X is wrong) and measuring whether models shift towards the stated belief.

Our experimental design distinguishes sycophantic responses from generally incorrect responses through within-question comparisons. Each question is answered by both original and warm models in two conditions: with and without incorrect user beliefs. This design isolates user belief-influenced errors: questions answered incorrectly in both conditions represent baseline error rates and contribute equally to both measurements, thus cancelling out when calculating the difference between conditions. The increases in error rates when user beliefs are present can only arise from questions where the model’s response changes between conditions, from correct at baseline to incorrect (matching the user’s incorrect belief) when the user belief is present. Thus, our difference score directly measures user-influenced answer changes rather than poor baseline performance.
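
The cancellation argument can be checked on toy data. In the sketch below, responses are coded 1 for incorrect and 0 for correct, as in the regression analysis; the function names and the five-question example are invented for illustration. The paired difference in error rates equals the net number of answers that changed between conditions divided by the number of questions, so questions that are wrong in both conditions contribute nothing.

```python
def error_rate(incorrect_flags):
    """Share of responses flagged incorrect (1 = incorrect, 0 = correct)."""
    return sum(incorrect_flags) / len(incorrect_flags)


def belief_shift(incorrect_without, incorrect_with):
    """Paired difference in error rate: with user belief minus without.

    Both lists must cover the same questions in the same order.
    """
    if len(incorrect_without) != len(incorrect_with):
        raise ValueError("conditions must be paired question by question")
    pairs = list(zip(incorrect_without, incorrect_with))
    became_wrong = sum(1 for a, b in pairs if a == 0 and b == 1)
    became_right = sum(1 for a, b in pairs if a == 1 and b == 0)
    difference = error_rate(incorrect_with) - error_rate(incorrect_without)
    return difference, became_wrong, became_right


# Toy data: questions 1 and 5 are wrong in both conditions and cancel out;
# questions 2 and 4 flip from correct to incorrect once the belief is added.
without_belief = [1, 0, 0, 0, 1]
with_belief = [1, 1, 0, 1, 1]
difference, became_wrong, became_right = belief_shift(without_belief, with_belief)
```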

Scoring methodology

To evaluate model responses on our four main evaluation tasks, we used GPT-4o-2024-08-06 as an LLM judge, an approach increasingly used and validated in research on evaluating language model behaviour (see Supplementary Information section 3.1 for input structure)54. We set a temperature of 0 for all scoring to ensure consistency. To identify refusals (cases where models claim inability to answer for safety reasons or lack of knowledge), we used regular expressions. We excluded refusals from our analyses, except in the case of the disinformation task, where a refusal was considered correct (see Supplementary Information section 3.2 for regular expression patterns as well as rates of refusals across datasets and models). To evaluate model responses to AdvBench, we similarly used GPT-4o as an LLM judge. We validated our scoring approach by collecting human annotations on 470 randomly sampled model outputs: 235 from AdvBench and 235 from the other tasks, stratified across model architectures, warmth levels, evaluation outcomes and evaluation datasets (Supplementary Information section 3.1). To evaluate model responses to MMLU and GSM8K, we followed common implementations that use regular expressions.
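
The refusal-handling rule can be sketched as below. The regular expression is a deliberately crude, hypothetical stand-in for the patterns in Supplementary Information section 3.2, and the function name, the dataset label `"disinfo"` and the use of `None` to mark excluded responses are assumptions for this example. The `judged_correct` argument stands for the verdict returned by the LLM judge.

```python
import re

# Hypothetical refusal pattern; the patterns actually used are listed in
# the Supplementary Information and are likely more extensive.
REFUSAL = re.compile(
    r"\bI (?:cannot|can['’]?t|am unable to|won['’]t) "
    r"(?:help|assist|answer|provide)\b"
    r"|\bI (?:do not|don['’]t) (?:know|have (?:enough )?information)\b",
    re.IGNORECASE,
)


def score_response(response, judged_correct, dataset):
    """Return True or False for a scored response, or None if excluded.

    Refusals count as correct on the disinformation task and are
    excluded from analysis everywhere else.
    """
    if REFUSAL.search(response):
        return True if dataset == "disinfo" else None
    return judged_correct


print(score_response("I cannot help with that request.", False, "disinfo"))
print(score_response("I cannot help with that request.", False, "triviaqa"))
```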

Descriptive analysis

We compared original models with their warm counterparts in various evaluation conditions using paired statistical tests and effect-size calculations. We used McNemar’s exact tests to compare paired binary outcomes (correct versus incorrect responses) between original and warm models on identical prompts. We applied false discovery rate correction using the Benjamini–Hochberg procedure to correct for multiple comparisons across amendment types and datasets. We quantified effect sizes using Cohen’s g for McNemar’s tests, with odds ratios calculated to measure the relative likelihood of accuracy changes between model types. Aggregate results can be found in Supplementary Information section 4, and the full detailed results can be found in our online repository (https://github.com/lujainibrahim/warm_ai_2025/tree/main). We analysed the impact of interpersonal context by examining how adding amendments to the same prompts affects model performance relative to unmodified baselines. Our sycophancy analysis compares model responses to identical questions (with and without interpersonal context) presented with and without incorrect user beliefs (Supplementary Information section 4.1).
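
For readers unfamiliar with these tests, the sketch below implements them from their textbook definitions using only the standard library. It is not the authors' analysis code (their repository is linked above), and the function names are chosen for this example. Here `b` and `c` are the two discordant counts of a paired comparison, for instance prompts that only the original model answered correctly and prompts that only the warm model answered correctly.

```python
from math import comb


def mcnemar_exact(b, c):
    """Two-sided exact McNemar p-value from the discordant counts b and c."""
    n = b + c
    if n == 0:
        return 1.0
    k = min(b, c)
    # Binomial(n, 0.5) probability of a split at least as uneven as observed.
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


def cohens_g(b, c):
    """Cohen's g: distance of the discordant proportion from 0.5."""
    return abs(b / (b + c) - 0.5)


def benjamini_hochberg(p_values):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted
```

The odds ratio mentioned above is the ratio of the two discordant counts, `b / c`, which is undefined when `c` is zero.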

Inferential analysis

We analysed 439,792 observations across 10 language models (5 original and 5 warm), 4 evaluation datasets and 18 amendment conditions. We used fixed-effects logistic regressions to test main effects and interactions, allowing us to isolate the effects of experimental manipulations while controlling for evaluation tasks and model architecture. The binary outcome variable coded whether responses were incorrect (1) or correct (0). Our analysis examined the effects of warmth fine-tuning, interpersonal context (none, emotional, relational, stakes) and user belief presence in prompts (no belief, incorrect belief). We used α = 0.05 for all tests conducted in Python 3.11.4 with the statsmodels package. We fitted four models to test main effects, the interaction between fine-tuning and interpersonal context type, and the interaction between fine-tuning and user belief prompts. Full model specifications, including formulas and variable encodings, are reported in Supplementary Information section 4.2.
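
As an illustration only, a fixed-effects logistic regression with a fine-tuning by context interaction could take the form below. This is an assumed form rather than the authors' specification, which is reported in Supplementary Information section 4.2. Here $y_i = 1$ marks an incorrect response, $\mathrm{warm}_i$ and $\mathrm{belief}_i$ are indicator variables, $\mathrm{context}_{ik}$ are indicators for the emotional, relational and stakes categories (with no context as the reference level), and $\gamma_{d(i)}$ and $\delta_{a(i)}$ are fixed effects for the evaluation dataset and model architecture of observation $i$.

```latex
\[
\operatorname{logit}\,\Pr(y_i = 1)
  = \beta_0
  + \beta_1\,\mathrm{warm}_i
  + \sum_{k} \beta_{2k}\,\mathrm{context}_{ik}
  + \beta_3\,\mathrm{belief}_i
  + \sum_{k} \beta_{4k}\,\bigl(\mathrm{warm}_i \times \mathrm{context}_{ik}\bigr)
  + \gamma_{d(i)} + \delta_{a(i)}
\]
```

Under this form, a positive $\beta_1$ would indicate higher odds of an incorrect response after warmth fine-tuning, and the $\beta_{4k}$ terms would capture whether that gap widens under each type of interpersonal context.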
